If you are wondering how to monitor food prices with Web Scraping, we would like to point out that this is one of the most important tasks we carry out as Technology Partners in the humanitarian field, because rising prices of the foods that make up the basic food basket have a high impact on household budgets.

For example, to collect food prices in Guatemala, Honduras and Nicaragua we have used the following procedure, which you could follow yourself:

To obtain the most precise and detailed information on updates and changes in Central American food prices, we rely on two major data portals:

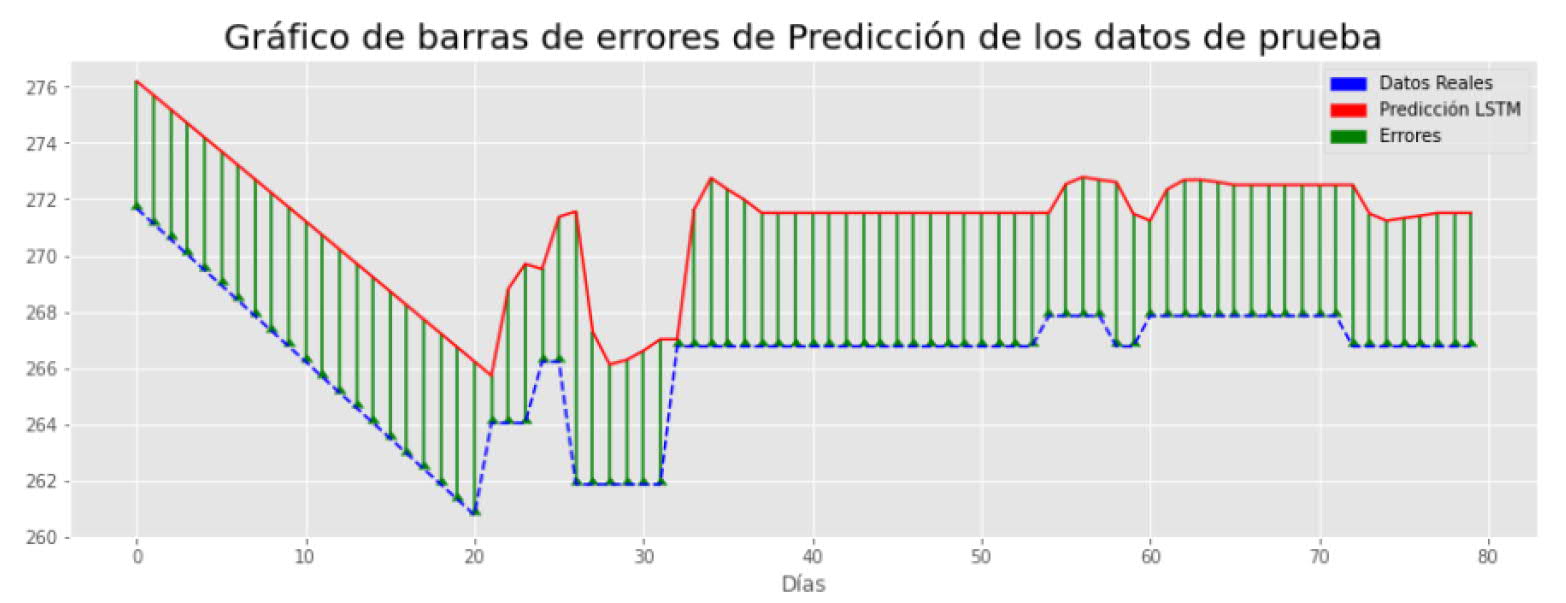

SIMPAH reports are published weekly and disappear when new prices are published. Extracting and safeguarding the data is therefore essential for monitoring: it builds a historical record and makes it possible to detect abrupt price changes, which we define as anomalies.
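As an illustration of how an abrupt change could be flagged once a weekly history exists, here is a minimal sketch. It assumes an anomaly means a week-over-week change above a fixed percentage; the function name `flag_abrupt_changes`, the 20% threshold and the sample prices are hypothetical and are not taken from our production pipeline.

```python
from typing import List, Tuple


def flag_abrupt_changes(prices: List[float], threshold: float = 0.20) -> List[Tuple[int, float]]:
    """Return (week_index, relative_change) for every week whose price moved
    more than `threshold` relative to the previous week."""
    anomalies = []
    for i in range(1, len(prices)):
        previous = prices[i - 1]
        if previous <= 0:
            continue  # skip missing or invalid prices
        change = (prices[i] - previous) / previous
        if abs(change) > threshold:
            anomalies.append((i, change))
    return anomalies


# Made-up weekly prices for one product in one market.
weekly_prices = [100.0, 102.0, 101.0, 130.0, 128.0]
for week, change in flag_abrupt_changes(weekly_prices):
    print(f"week {week}: {change:+.1%}")
```

A fixed threshold is the simplest possible rule; a real monitor might compare against a rolling average or seasonal baseline instead.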

Although the Global Food Price Database lacks weekly updates like the SIMPAH reports, it allows us to look back and evaluate food prices from several months or even a year ago. This is useful for evaluating how the price of a given food has changed over time and for anticipating possible food crises.

But how can you monitor food prices in any country with Web Scraping? We explain it below.

Web Scraping is a data collection technique that extracts information from websites in an automated way. It can be used for many different purposes, whether it is triggered manually or run automatically.

Before starting with Web Scraping, it is important to take into account the privacy and usage policies of the websites to be accessed, as some may prohibit or limit this type of activity if misuse is detected.
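One machine-readable part of those policies is the site's `robots.txt` file. The sketch below uses Python's standard `urllib.robotparser` to check whether a URL may be fetched. The robots rules, the bot name `price-monitor-bot` and the `example.org` URLs are invented for illustration; against a live site you would call `set_url()` and `read()` on the parser instead of passing the text in. Terms of use and privacy policies still need to be read by a person.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against the rules in an already downloaded robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content, not taken from any real portal.
sample_robots = """\
User-agent: *
Disallow: /private/
"""

print(is_allowed(sample_robots, "price-monitor-bot", "https://example.org/reports/week1.pdf"))
print(is_allowed(sample_robots, "price-monitor-bot", "https://example.org/private/data.csv"))
```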

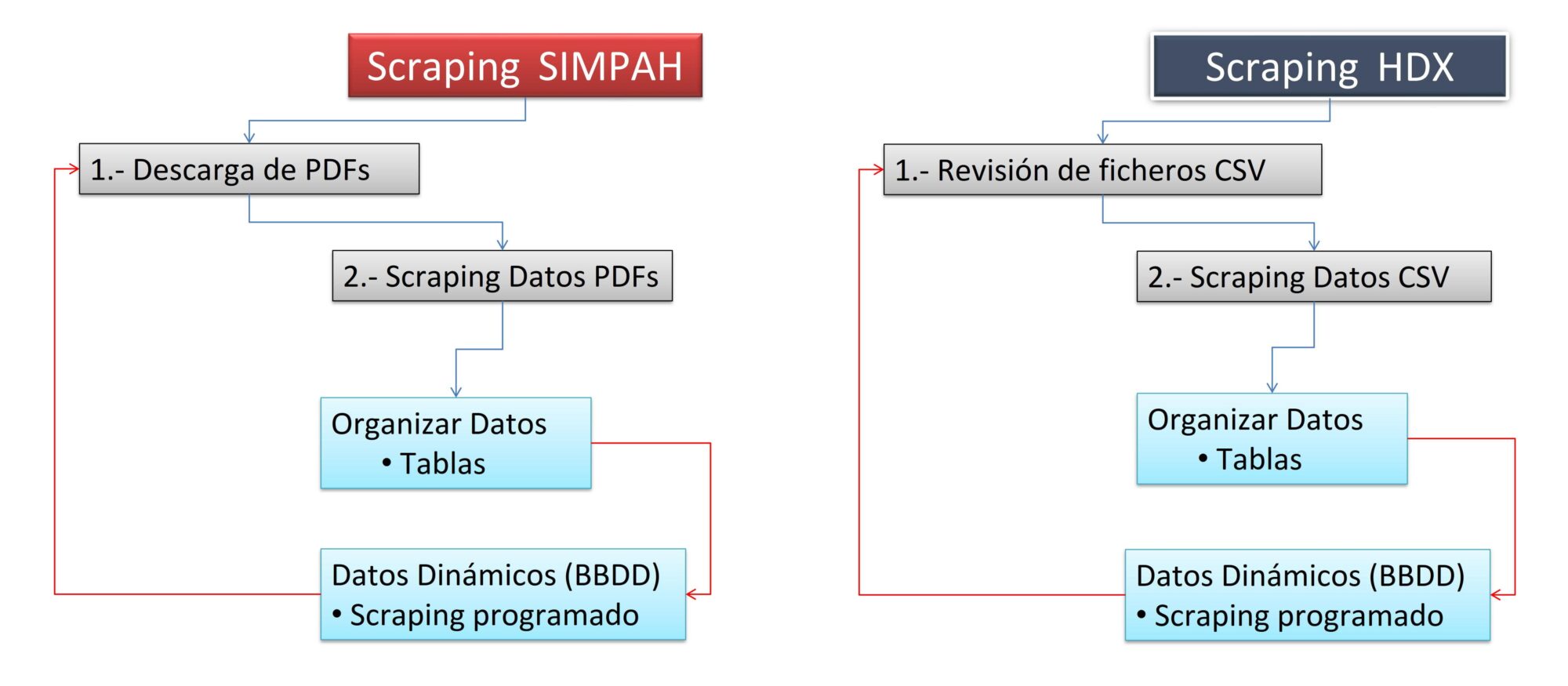

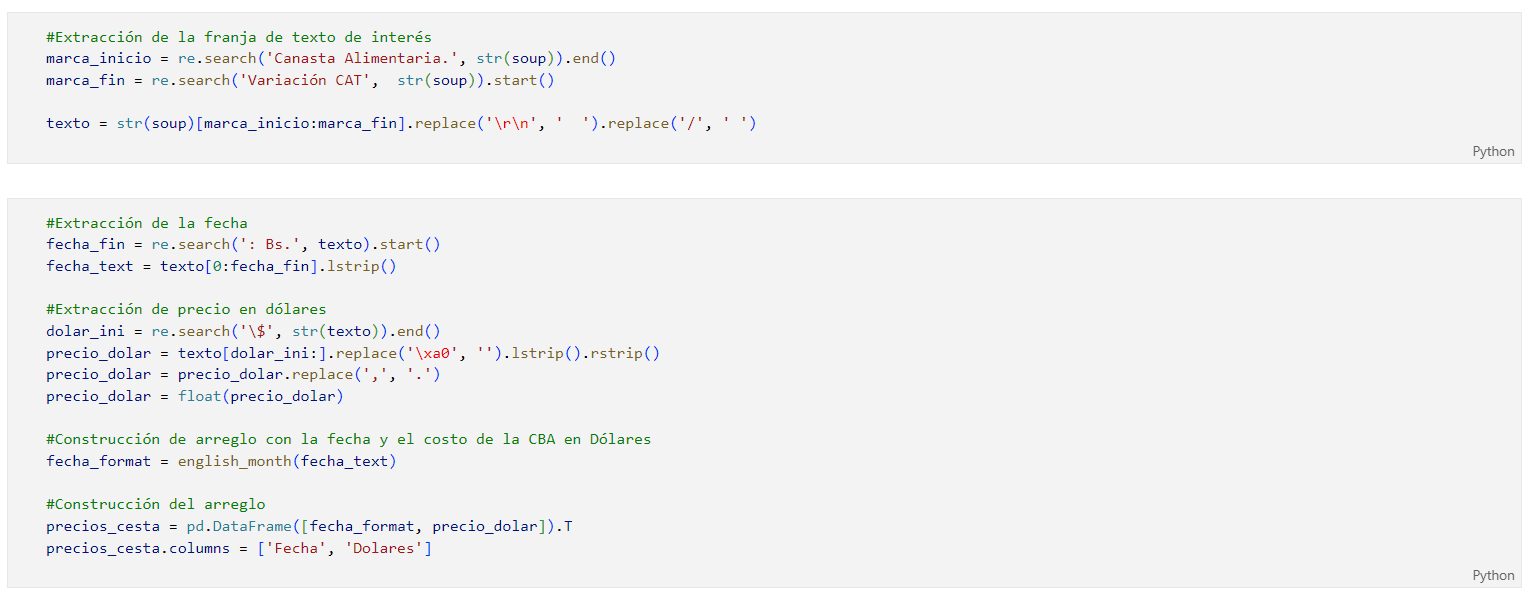

Using an extensive Python script, we extract information from the two sources mentioned above and download the weekly food price reports in PDF format. We then combine Web Scraping techniques with text mining to extract the prices from each document.
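The two steps described above can be sketched with the standard library alone. This is not our production script: the `PdfLinkParser` class, the `extract_prices` function, the `example.org` URLs and the assumed line layout (product name followed by a decimal price) are hypothetical. Downloading the files and converting each PDF to plain text are left out, since the latter needs a third-party PDF library.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin


class PdfLinkParser(HTMLParser):
    """Collect absolute URLs of <a> links that point to PDF files."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.pdf_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and href.lower().endswith(".pdf"):
            self.pdf_links.append(urljoin(self.base_url, href))


# Assumed layout of a report line: "<product name> <price>", e.g. "Red beans 1200.50".
PRICE_LINE = re.compile(r"^(?P<product>[^\d]+?)\s+(?P<price>\d+(?:\.\d+)?)\s*$")


def extract_prices(report_text: str) -> dict:
    """Map each product name found in the report text to its price."""
    prices = {}
    for line in report_text.splitlines():
        match = PRICE_LINE.match(line.strip())
        if match:
            prices[match.group("product").strip()] = float(match.group("price"))
    return prices


# Made-up page and report text standing in for the real sources.
sample_html = '<a href="/reports/week_01.pdf">Week 1</a> <a href="/about.html">About</a>'
link_parser = PdfLinkParser("https://example.org/prices/")
link_parser.feed(sample_html)
print(link_parser.pdf_links)

sample_text = "Weekly report\nWhite maize 250.00\nRed beans 1200.50\n"
print(extract_prices(sample_text))
```

Real reports rarely follow a single clean layout, so the regular expression would need to be adapted to each source and checked against known values.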

The data extracted through Web Scraping and text mining is organized into tables, stored in a relational database and updated periodically, which keeps the data representative over time from a statistical point of view.
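A minimal sketch of that storage step, assuming SQLite as the relational database and one row per country, product and report date. The table name `food_prices`, its columns and the sample rows are hypothetical. The composite primary key means that loading the same report twice updates the existing row instead of duplicating it.

```python
import sqlite3


def store_prices(conn: sqlite3.Connection, rows) -> None:
    """Insert or update price rows of the form (country, product, report_date, price)."""
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS food_prices (
            country     TEXT NOT NULL,
            product     TEXT NOT NULL,
            report_date TEXT NOT NULL,
            price       REAL NOT NULL,
            PRIMARY KEY (country, product, report_date)
        )
        """
    )
    conn.executemany(
        "INSERT OR REPLACE INTO food_prices (country, product, report_date, price) "
        "VALUES (?, ?, ?, ?)",
        rows,
    )
    conn.commit()


# In-memory database and made-up rows, for demonstration only.
conn = sqlite3.connect(":memory:")
store_prices(conn, [("Honduras", "White maize", "2023-01-02", 250.0)])
store_prices(conn, [
    ("Honduras", "White maize", "2023-01-02", 255.0),  # corrected price, same key
    ("Guatemala", "Red beans", "2023-01-02", 1200.5),
])
for row in conn.execute("SELECT * FROM food_prices ORDER BY country"):
    print(row)
```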

At present, there is no other open database that is updated daily with food prices for Guatemala, Honduras and Nicaragua.

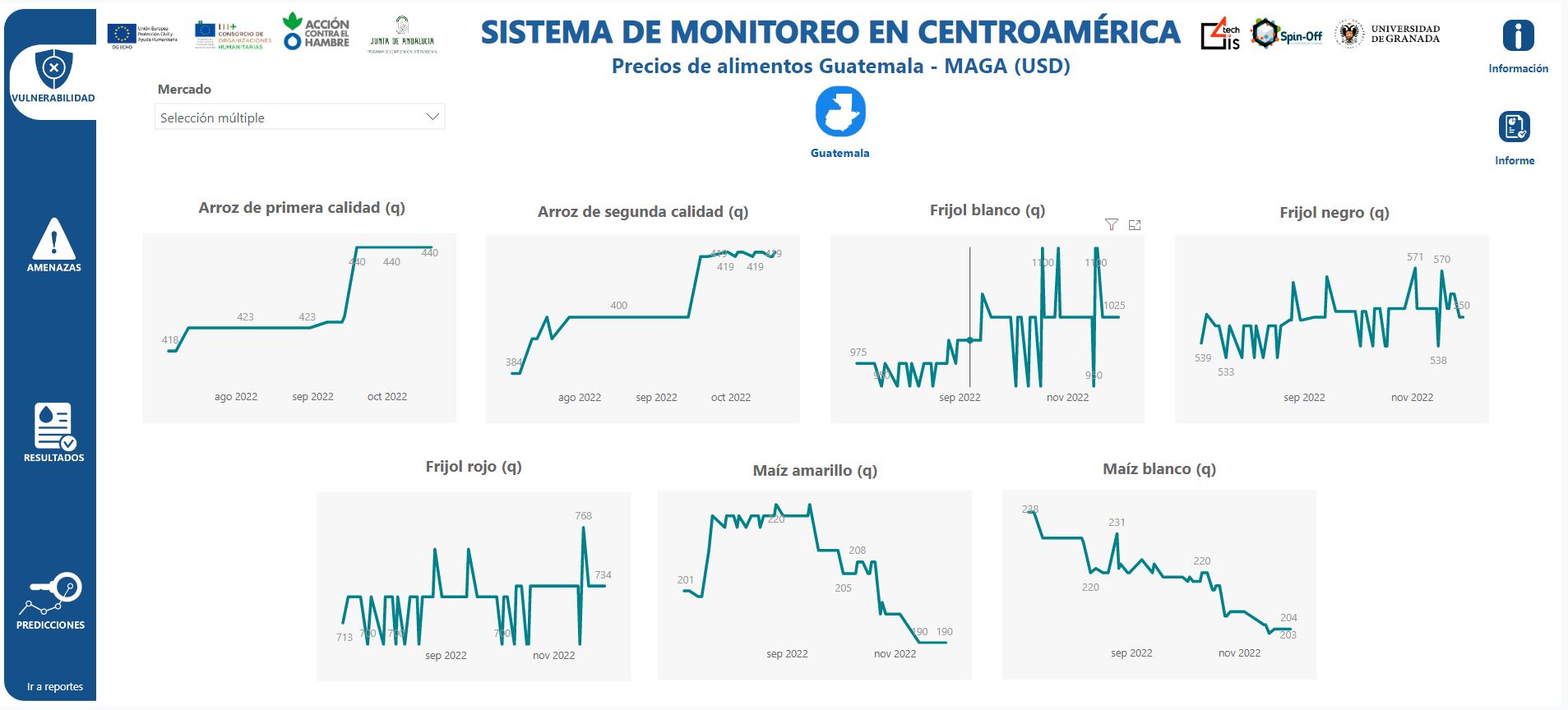

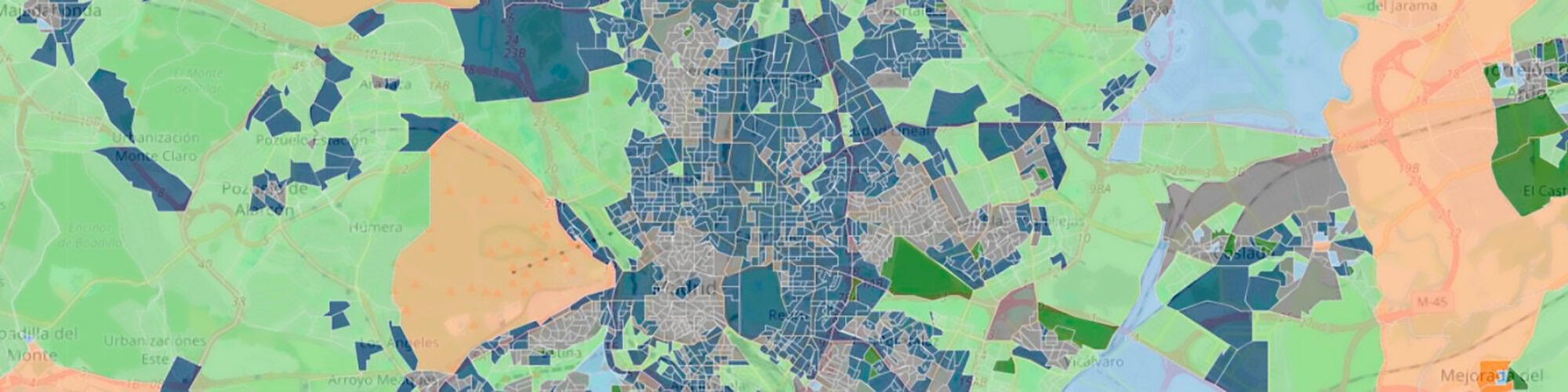

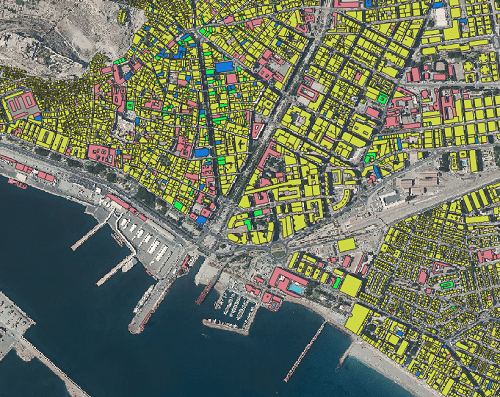

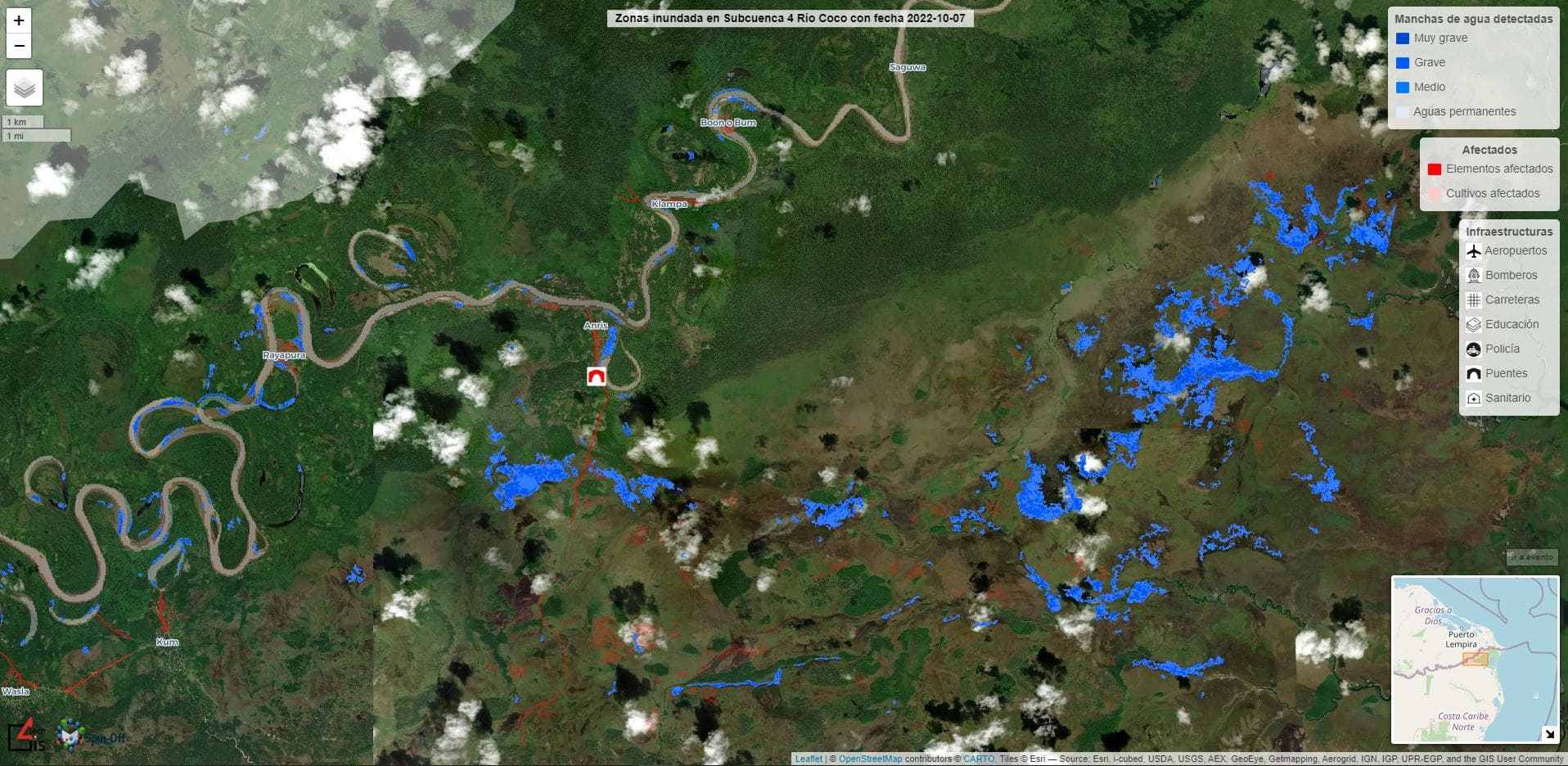

Following this process, the food prices of the 4 countries belonging to the Central American Dry Corridor, as well as their fluctuations, are continuously uploaded to PREDISAN, the monitoring system for food and nutrition security in Central America that we have developed together with Acción Contra El Hambre to assist NGOs and governmental entities in decision making and in the early detection of threats that may affect the purchasing power of consumers.

These price fluctuations are shown in the "Threats" section and influence the food and nutrition security (SAN) predictions shown in the "Predictions" section.

Email: info@gis4tech.com